XML Is Making a Comeback in Prompt Engineering — And It Makes LLMs Better

I’m Siddhesh, a Microsoft Certified Trainer, cloud architect, and AI practitioner focused on helping developers and organizations adopt AI effectively. As a Pluralsight instructor and speaker, I design and deliver hands-on AI enablement programs covering Generative AI, Agentic AI, Azure AI, and modern cloud architectures.

With a strong foundation in Microsoft .NET and Azure, my work today centers on building real-world AI solutions, agentic workflows, and developer productivity using AI-assisted tools. I share practical insights through workshops, conference talks, online courses, blogs, newsletters, and YouTube—bridging the gap between AI concepts and production-ready implementations.

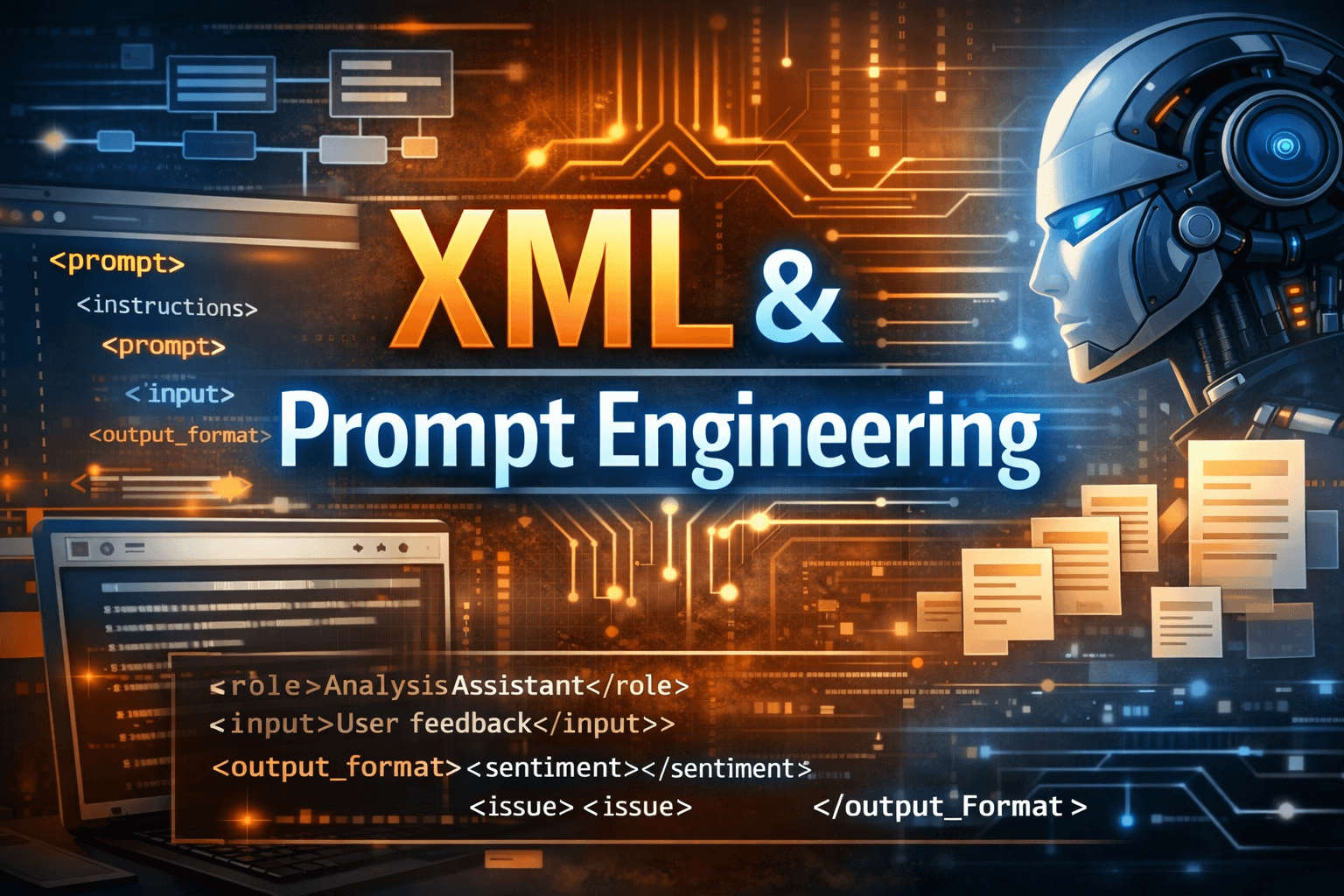

Introduction: Prompt Engineering and Its Evolution

Prompt engineering is the practice of designing inputs to Large Language Models (LLMs) in a way that reliably produces accurate, safe, and useful outputs. While early experimentation focused on natural language instructions alone, the field has matured rapidly. Today, prompt engineering borrows ideas from software engineering: structure, constraints, separation of concerns, and validation.

One of the most interesting developments in this evolution is the resurgence of XML-style structured prompts. Far from being obsolete, XML is re-emerging as a powerful way to improve determinism, interpretability, and reliability when interacting with LLMs.

This article explores:

What prompt engineering is and why structure matters

Common prompt engineering techniques

Why structured prompts outperform free-form prompts

How XML is being explicitly recommended by OpenAI, Anthropic, and Google Gemini

Practical, runnable examples using XML-style prompts

What Is Prompt Engineering?

At its core, prompt engineering is about communication: translating human intent into instructions that an LLM can reliably follow.

A prompt typically defines:

Role - what the model is supposed to be

Task - what it should do

Context - background knowledge or constraints

Constraints – rules and limitations

Output format - how the response should be structured

Early prompts often mixed all of this into a single paragraph. That approach works—until it doesn’t. As prompts grow in size and responsibility, ambiguity creeps in, outputs drift, and reliability suffers.

As models become more capable, prompts become less about clever phrasing and more about clarity, structure, and constraints.

Common Prompt Engineering Techniques

Before discussing XML, it’s useful to understand where it fits among established techniques.

1. Zero-shot Prompting

You ask the model to perform a task without examples.

Summarize the following text in 3 bullet points.

2. Few-shot Prompting

You provide examples to guide the model’s behavior.

Input: The app crashes on login.

Output: Bug

Input: Can you add dark mode?

Output: Feature request

Input: Page loads slowly.

Output:

3. Chain-of-Thought Prompting

You explicitly ask the model to reason step by step.

Solve the problem step by step and explain your reasoning.

4. Role-based Prompting

You assign an explicit persona or role.

You are a senior backend architect reviewing an API design.

These techniques remain valuable, but they do not address a deeper issue: how instructions, data, and constraints are separated and interpreted by the model.

That is fundamentally a structural problem.

Why Structured Prompts Produce Better Results

Free-form prompts rely heavily on the model’s interpretation of natural language. This introduces ambiguity:

Instructions blend with context

Output formats are inconsistently followed

Long prompts become hard to parse mentally and for the model

Structured prompts solve this by:

Clearly separating instructions, input data, and output constraints

Making intent explicit

Reducing prompt injection risks

Improving consistency across runs

This is where XML excels.

Why XML (and Not Just JSON or Markdown)?

You might ask: why XML instead of JSON, YAML, or Markdown?

XML offers the following key advantages in prompt engineering:

Explicit semantic boundaries

Tags clearly communicate intent: <instructions>, <input>, <constraints>, <output_format>.Hierarchical structure

XML naturally represents nested reasoning, workflows, and multi-agent orchestration.Model alignment

Modern LLMs are trained extensively on markup-like structures, including XML and HTML.Human and machine readable

XML remains easy to scan visually while being trivial to parse programmatically.

JSON is excellent for data exchange, but it is less expressive for instructions and reasoning structure. Markdown improves readability, but lacks strict boundaries. XML sits in a productive middle ground.

As a result, XML-style prompts are easier for models to follow — and harder for them to misunderstand.

XML in Prompt Engineering: Industry Recommendations

All major LLM providers now explicitly recommend structured prompting, often using XML tags.

- OpenAI

OpenAI documentation emphasizes clear separation of instructions, input, and output formatting. XML-style delimiters are recommended for complex prompts to improve reliability.

- Anthropic (Claude)

Anthropic explicitly recommends XML tags to:

Separate user content from instructions

Prevent prompt injection

Improve output consistency

- Google Gemini

Google Gemini documentation highlights structured prompting strategies to guide reasoning, formatting, and task decomposition.

The message is consistent: structure matters, and XML is a first-class tool for achieving it.

Example 1: Unstructured vs Structured Prompt

❌ Unstructured Prompt

You are a helpful assistant. Analyze the customer feedback below and classify sentiment and extract key issues.

The app crashes when I upload files and support is slow.

✅ XML-Structured Prompt

<prompt>

<role>You are a customer feedback analysis assistant.</role>

<instructions>

Classify the sentiment and extract key issues from the feedback.

</instructions>

<input>

The app crashes when I upload files and support is slow.

</input>

<output_format>

<sentiment></sentiment>

<issues>

<issue></issue>

</issues>

</output_format>

</prompt>

Why this works better

The model knows exactly what is instruction vs data

Output expectations are explicit

Results are easier to parse programmatically

Example 2: XML for Multi-Step Reasoning

<prompt>

<instructions>

Answer the question by following the steps in order.

</instructions>

<steps>

<step>Identify the problem</step>

<step>Analyze constraints</step>

<step>Propose a solution</step>

</steps>

<question>

How should we design a rate-limiting strategy for a public API?

</question>

<output_format>

<analysis></analysis>

<solution></solution>

</output_format>

</prompt>

This structure encourages disciplined reasoning without explicitly exposing chain-of-thought beyond what you request.

Example 3: XML for Agentic Workflows

<agent_task>

<context>

You are part of an autonomous code review agent.

</context>

<repository_language>Python</repository_language>

<objectives>

<objective>Detect security issues</objective>

<objective>Suggest performance improvements</objective>

</objectives>

<constraints>

<constraint>No code execution</constraint>

<constraint>Explain recommendations clearly</constraint>

</constraints>

<output_format>

<findings>

<security></security>

<performance></performance>

</findings>

</output_format>

</agent_task>

This pattern is increasingly common in agentic AI systems, orchestration frameworks, and evaluation pipelines.

Practical Guidance: When to Use XML Prompts

Use XML-style prompts when:

Prompts exceed a few paragraphs

Outputs must be machine-consumable

Prompts are dynamically generated

You are building agents or workflows

Safety and injection resistance matter

Avoid XML when:

You are doing quick exploratory prompting

The task is trivial and short-lived

Conclusion: XML Is Not Old — It’s Mature

XML’s resurgence in prompt engineering is not nostalgia — it’s a necessity now.

As prompts become:

Longer

Dynamically generated

Embedded in production systems

…structure becomes non-negotiable.

XML provides:

Clarity

Safety

Consistency

Composability

In a world where LLMs are becoming core infrastructure, XML-style prompting is less about syntax and more about engineering discipline.