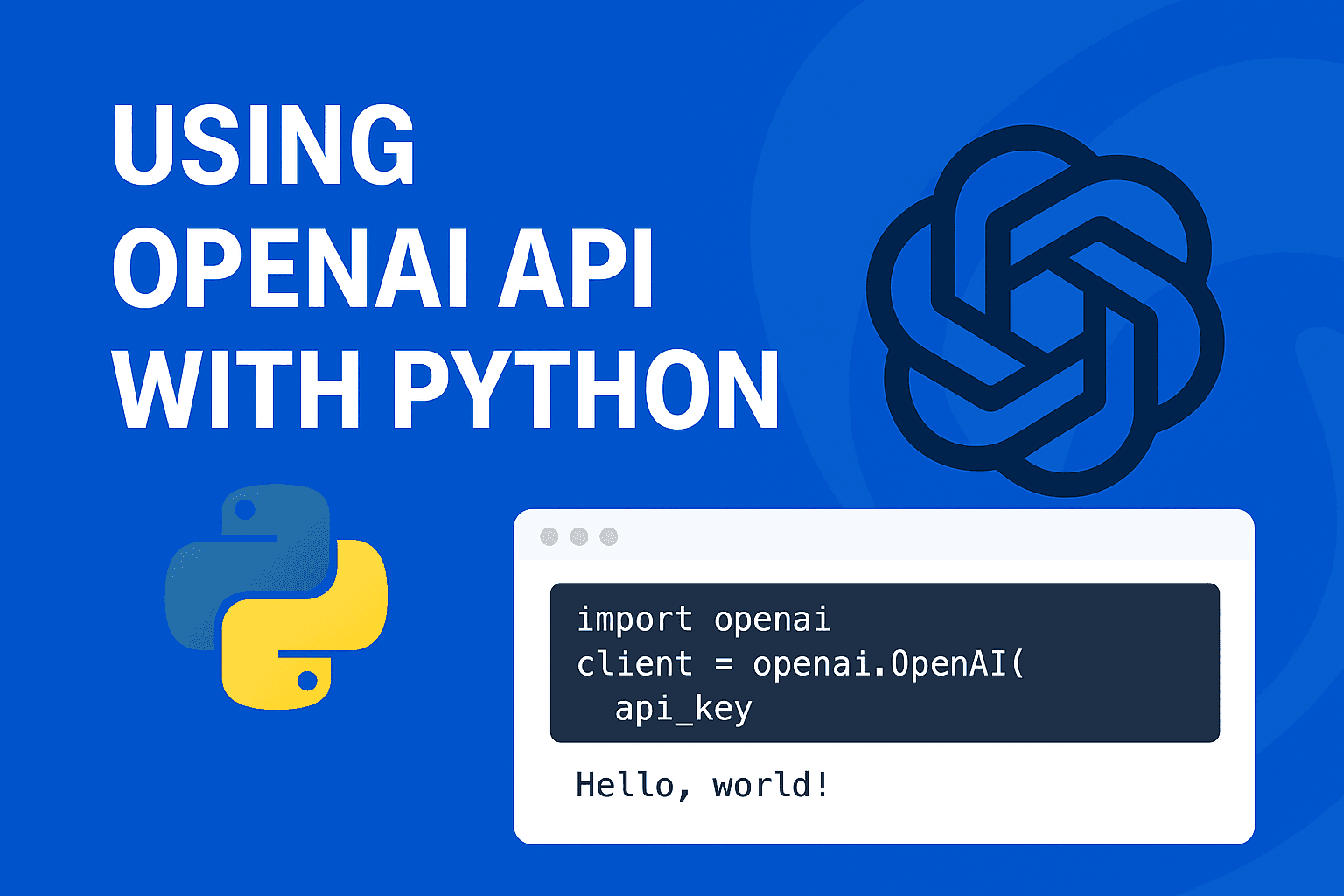

Getting Started with OpenAI API in Python: A Step-by-Step Guide

From Zero to Hero: A Beginner's Guide to Using the OpenAI API with Python

I’m Siddhesh, a Microsoft Certified Trainer, cloud architect, and AI practitioner focused on helping developers and organizations adopt AI effectively. As a Pluralsight instructor and speaker, I design and deliver hands-on AI enablement programs covering Generative AI, Agentic AI, Azure AI, and modern cloud architectures.

With a strong foundation in Microsoft .NET and Azure, my work today centers on building real-world AI solutions, agentic workflows, and developer productivity using AI-assisted tools. I share practical insights through workshops, conference talks, online courses, blogs, newsletters, and YouTube—bridging the gap between AI concepts and production-ready implementations.

Introduction

Artificial Intelligence (AI) is rapidly transforming how we work, learn, and create. Among the most powerful tools in this space are large language models (LLMs) like OpenAI’s GPT models, which can generate, summarize, and analyze text with human-like fluency.

If you’re a developer, data scientist, or AI enthusiast, learning how to integrate the OpenAI API into your Python projects is a valuable skill. Whether you’re building a chatbot, automating document summarization, or experimenting with structured data extraction, Python makes it easy to get started.

In this guide, we’ll walk through:

Setting up your Python environment

Installing necessary libraries

Using the OpenAI API for text summarization

Understanding how chat roles work

Generating structured outputs (bullet points, JSON, custom formats)

Let’s dive in.

Part 1: Setting Up Your Workspace

First things first, let's get your development environment ready. This involves getting Python installed, setting up a dedicated project folder, and securing your API key.

Prerequisites

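A recent Python 3 release (3.9 or newer is a safe choice): Early 1.x releases of the openai library ran on Python 3.7.1, but newer releases require a more recent interpreter, so check the library's README for the current minimum. If you don't have Python, you can download it from the official Python website.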

A terminal or command prompt.

An internet connection.

Step 1: Get Your OpenAI API Key 🔑

Your API key is your secret password to access OpenAI's models.

Go to the OpenAI Platform and create an account or log in.

Navigate to the API Keys section in the dashboard.

Click "Create new secret key." Give it a name you'll recognize (e.g., "PythonProjectKey").

Important: Copy the key immediately and save it somewhere secure, like a password manager. You will not be able to see it again after you close the window.

Step 2: Create and Configure Your Python Project

It's a best practice to create a dedicated folder and a virtual environment for each project. This keeps dependencies isolated and your projects tidy.

Open your terminal and run these commands to create and enter a new project folder:

    mkdir llm-api-demo
    cd llm-api-demo

Create a virtual environment named venv:

    python -m venv venv

Activate the virtual environment. The command differs based on your operating system:

On macOS/Linux:

    source venv/bin/activate

On Windows:

    venv\Scripts\activate

You'll know it's active when you see (venv) at the beginning of your terminal prompt.
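If the (venv) prefix is hidden by your shell theme, you can also ask Python itself. The following is a minimal sketch, assuming the environment was created with python -m venv as above; the function name in_virtualenv is just an illustrative choice.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter is running inside a venv."""
    # Inside a venv, sys.prefix points at the environment folder while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


print("Virtual environment active:", in_virtualenv())
print("Interpreter path:", sys.executable)
```

When the environment is active, the interpreter path should point inside your llm-api-demo/venv folder.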

With the virtual environment active, install the necessary Python libraries:

    pip install openai python-dotenv jupyter

openai: The official Python library for interacting with the OpenAI API.

python-dotenv: A handy tool to manage environment variables, which is how we'll protect our API key.

jupyter: An interactive coding environment perfect for experimenting.
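To confirm that the install landed in the active environment, you can query the package metadata from Python. This is a small sketch that assumes Python 3.8 or newer (where importlib.metadata is part of the standard library); installed_version is a hypothetical helper name.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report on the three packages installed in this step.
for name in ("openai", "python-dotenv", "jupyter"):
    print(f"{name}: {installed_version(name) or 'not installed'}")
```

If any line says "not installed", the most likely cause is that pip ran outside the virtual environment.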

Create a file named .env in your llm-api-demo project folder. This file will securely store your API key. Add the key you saved earlier to this file:

    OPENAI_API_KEY=sk-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

🔒 Security Note: Never share your .env file or commit it to a public repository like GitHub. If you use Git, add .env to your .gitignore file.
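Before making any API calls, it helps to confirm the key is actually visible to Python without printing the secret itself. Here is one possible sketch; masked_api_key is a hypothetical helper, and it assumes the variable has already been loaded into the environment (for example by python-dotenv's load_dotenv()).

```python
import os
from typing import Mapping


def masked_api_key(env: Mapping[str, str] = os.environ) -> str:
    """Return a masked form of OPENAI_API_KEY, or raise if it is not set."""
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError("OPENAI_API_KEY is not set; check your .env file.")
    if len(key) < 12:
        # Too short to mask safely, so reveal nothing at all.
        return "***"
    # Show only the first 3 and last 4 characters so the full secret
    # never appears in notebook output or logs.
    return f"{key[:3]}...{key[-4:]}"


# Typical use in a notebook, after load_dotenv() has run:
#     print(masked_api_key())
```

A RuntimeError here usually means the .env file is in the wrong folder or the variable name is misspelled.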

Part 2: Making Your First API Call

Now for the exciting part! We'll use a Jupyter Notebook to write and run our Python code interactively.

In your terminal (with the virtual environment still active), start Jupyter:

    jupyter notebook

This will open a new tab in your web browser.

Click "New" and select "Python 3 (ipykernel)" to create a new notebook.

In the first cell of the notebook, enter the following code to summarize a piece of text.

    # Step 1: Import libraries and load the API key
    from openai import OpenAI
    import os
    from dotenv import load_dotenv

    load_dotenv()

    # Step 2: Initialize the OpenAI client
    # The library automatically looks for the OPENAI_API_KEY in your environment
    client = OpenAI()

    # Step 3: Define the text and make the API call
    input_text = """
    Large language models (LLMs) are a type of artificial intelligence that can
    generate human-like text based on the input they receive. These models are
    trained on massive datasets and can perform a wide range of language tasks,
    such as translation, summarization, and question answering. However, they also
    come with challenges like hallucination, bias, and the need for large amounts
    of computational power.
    """

    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[
            {"role": "system", "content": "You are a helpful assistant that summarizes text."},
            {"role": "user", "content": f"Summarize this:\n\n{input_text}"}
        ],
        temperature=0.5
    )

    # Step 4: Print the result
    print(response.choices[0].message.content)

Run the cell by pressing Shift + Enter. In a few moments, you should see a concise summary of the input_text printed below!
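The response object carries more than the text: it also reports why the model stopped and how many tokens the call used, which is handy when a summary looks cut off or you want to watch costs. The sketch below reads those fields; describe_response is a hypothetical helper, and the stand-in object with made-up values is only there so you can try it without calling the API.

```python
from types import SimpleNamespace


def describe_response(response) -> dict:
    """Collect the fields most useful for debugging a chat completion."""
    choice = response.choices[0]
    return {
        "text": choice.message.content,
        # "stop" means the model finished naturally; "length" means the
        # reply was cut off by the token limit.
        "finish_reason": choice.finish_reason,
        "prompt_tokens": response.usage.prompt_tokens,
        "completion_tokens": response.usage.completion_tokens,
        "total_tokens": response.usage.total_tokens,
    }


# Stand-in with the same attribute layout as a real response.
# All values here are invented for illustration.
fake = SimpleNamespace(
    choices=[
        SimpleNamespace(
            message=SimpleNamespace(content="LLMs generate text but can hallucinate."),
            finish_reason="stop",
        )
    ],
    usage=SimpleNamespace(prompt_tokens=80, completion_tokens=9, total_tokens=89),
)
print(describe_response(fake))
```

In the notebook, you would pass the real response from the cell above instead of the stand-in.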

Part 3: Anatomy of an API Call

Let's break down the key parameters in that client.chat.completions.create call to understand what's happening.

Model

The model parameter specifies which OpenAI model you want to use. We used "gpt-4o-mini", a smaller model in the GPT-4o family that trades some capability for faster responses and lower cost, which suits simple tasks like summarization. You can explore other models on the OpenAI Models page.
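Since the model is just a string, one convenient pattern is to read it from configuration so you can switch models without editing code. This is only a sketch: LLM_DEMO_MODEL is a hypothetical variable name invented for this example, not something the openai library reads on its own.

```python
import os
from typing import Mapping

DEFAULT_MODEL = "gpt-4o-mini"  # the model used throughout this guide


def pick_model(env: Mapping[str, str] = os.environ) -> str:
    """Return the model name from LLM_DEMO_MODEL, or the guide's default."""
    # LLM_DEMO_MODEL is a made-up variable name for this sketch.
    return env.get("LLM_DEMO_MODEL", "").strip() or DEFAULT_MODEL


print("Using model:", pick_model())
```

You could then pass model=pick_model() to client.chat.completions.create and set the variable in your .env file when you want to try a different model.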

Messages & Roles

The messages parameter is a list that forms the conversation. Each message is a dictionary with a role and content.

system: This sets the stage. It gives the AI its instructions or persona for the entire conversation. Think of it as the director telling the actor how to behave. It's often the first message.

user: This is your input: the question or command you are giving the model.

assistant: This role holds the model's previous responses. You use it to build multi-turn conversations, providing the AI with the chat history so it has context.
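To make the three roles concrete, here is a small sketch of how a multi-turn history can be assembled before it is sent as the messages parameter. The helper names (start_conversation, add_turn) and the assistant reply are invented for illustration; in a real app the assistant text would come from a previous API response.

```python
from typing import Dict, List

Message = Dict[str, str]


def start_conversation(system_prompt: str) -> List[Message]:
    """Begin a history with a single system message."""
    return [{"role": "system", "content": system_prompt}]


def add_turn(history: List[Message], user_text: str, assistant_text: str) -> None:
    """Record one completed exchange: the user's input and the model's reply."""
    history.append({"role": "user", "content": user_text})
    history.append({"role": "assistant", "content": assistant_text})


history = start_conversation("You are a helpful assistant that summarizes text.")
add_turn(
    history,
    "Summarize: LLMs generate human-like text.",
    "LLMs produce fluent text from prompts.",  # made-up reply
)
# The follow-up only makes sense because the earlier turns are included.
history.append({"role": "user", "content": "Now shorten that to five words."})

for message in history:
    print(f"{message['role']:>9}: {message['content']}")
```

Passing this history as messages gives the model the context it needs to understand what "that" refers to in the last user message.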

Temperature

The temperature parameter controls the randomness of the output. It ranges from 0 to 2.

A lower value (e.g., 0.2) makes the output more deterministic and focused, which is good for factual tasks like summarization or code generation.

A higher value (e.g., 0.8) makes the output more creative and random, which is great for brainstorming or writing stories.
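For intuition about why temperature changes randomness, the sketch below applies the textbook definition: scores for candidate tokens are divided by the temperature before being turned into probabilities. This illustrates the general idea only, not OpenAI's actual sampler; the scores are made up, and this sketch requires a temperature above 0 even though the API accepts 0.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities after scaling by temperature."""
    if temperature <= 0:
        raise ValueError("this sketch needs temperature > 0")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # invented scores for three candidate tokens
for t in (0.2, 0.8, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

At low temperature nearly all the probability goes to the top-scoring token, while at high temperature the alternatives get a real chance of being picked.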

Part 4: Advanced Magic - Getting Structured Output

Sometimes you don't just want plain text; you need data in a predictable format like JSON or a bulleted list. This is crucial for building applications where you need to parse the model's output.

The Easy Way: Prompt Engineering

You can often get a structured output just by asking for it in your prompt.

Bullet Point Summary

To get a bulleted list, simply adjust your system and user messages.

    response_bullets = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[
            {"role": "system", "content": "You are a helpful assistant that summarizes text into bullet points."},
            {"role": "user", "content": f"Summarize the following text into 3-5 concise bullet points:\n\n{input_text}"}
        ],
        temperature=0.4
    )

    print(response_bullets.choices[0].message.content)
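If you want to use those bullets in code rather than just read them, you need to split the text into a list. Here is a rough sketch; parse_bullets is a hypothetical helper, the sample text is invented, and the pattern only covers the common markers (-, *, •, and numbered items), so treat it as a starting point.

```python
import re
from typing import List

# Matches a leading "-", "*", "•", or a number like "1." / "1)" plus spaces.
_BULLET_PREFIX = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+")


def parse_bullets(text: str) -> List[str]:
    """Return the bullet items found in text, without their markers."""
    items = []
    for line in text.splitlines():
        if _BULLET_PREFIX.match(line):
            items.append(_BULLET_PREFIX.sub("", line).strip())
    return items


# Invented sample shaped like a typical model reply.
sample = """Here is the summary:
- LLMs generate human-like text.
- They are trained on massive datasets.
* Challenges include hallucination and bias.
"""
print(parse_bullets(sample))
```

Because the model is free to choose its own bullet style or add an introductory sentence, this kind of parsing is inherently fragile.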

The Robust Way: JSON Mode

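For applications that need machine-readable output, asking in the prompt alone can sometimes fail: the model may wrap the JSON in extra text or leave it malformed. A more reliable method is to use JSON Mode. By adding one parameter, you constrain the model to return syntactically valid JSON. Two caveats apply. The word "JSON" must still appear somewhere in your messages, or the API rejects the request. And JSON Mode does not guarantee that the object contains the fields you asked for, so validate the result before relying on it.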

Let's ask the model for a summary and a list of key points in a structured JSON format.

    response_json = client.chat.completions.create(
        model="gpt-4o-mini",
        # Add this parameter to enable JSON Mode
        response_format={ "type": "json_object" },
        messages=[
            {"role": "system", "content": "You are a helpful assistant that returns summaries in a valid JSON format."},
            {"role": "user", "content": f"Summarize the text. Return a JSON object with a 'summary' field and a 'key_points' array of strings.\n\nText:\n{input_text}"}
        ],
        temperature=0.4
    )

    print(response_json.choices[0].message.content)

Now the output will be a clean, parsable JSON string, perfect for integrating into a larger application.
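The content is still a string, so the next step in an application is to parse it and check that the expected fields are present. Below is a minimal sketch using only the standard library; parse_summary is a hypothetical helper, and the sample string is made up to match the shape requested in the prompt above.

```python
import json
from typing import Any, Dict


def parse_summary(content: str) -> Dict[str, Any]:
    """Parse the model's JSON string and check the fields we asked for."""
    data = json.loads(content)  # raises json.JSONDecodeError on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    summary = data.get("summary")
    key_points = data.get("key_points")
    if not isinstance(summary, str):
        raise ValueError("'summary' must be a string")
    if not isinstance(key_points, list) or not all(
        isinstance(point, str) for point in key_points
    ):
        raise ValueError("'key_points' must be a list of strings")
    return {"summary": summary, "key_points": key_points}


# Invented sample in the shape the prompt requests.
sample = (
    '{"summary": "LLMs generate text.", '
    '"key_points": ["Trained on large datasets", "Can hallucinate"]}'
)
result = parse_summary(sample)
print(result["summary"])
for point in result["key_points"]:
    print("-", point)
```

In the notebook, you would call parse_summary(response_json.choices[0].message.content) and handle the exceptions in whatever way suits your application.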

Next Steps

With this foundation, you can now:

Build chatbots with context retention

Create document summarizers

Extract structured insights (entities, sentiment, action items)

Integrate LLMs into apps, dashboards, or workflows

Explore more in the official OpenAI API documentation.

Conclusion

Learning how to use the OpenAI API with Python unlocks endless possibilities — from automating tedious tasks to building intelligent assistants.

By setting up a secure Python environment, managing your API key properly, and experimenting with structured outputs, you’ll be well on your way to building AI-powered applications.

The AI revolution is here, and Python + OpenAI makes it easier than ever to be a part of it.