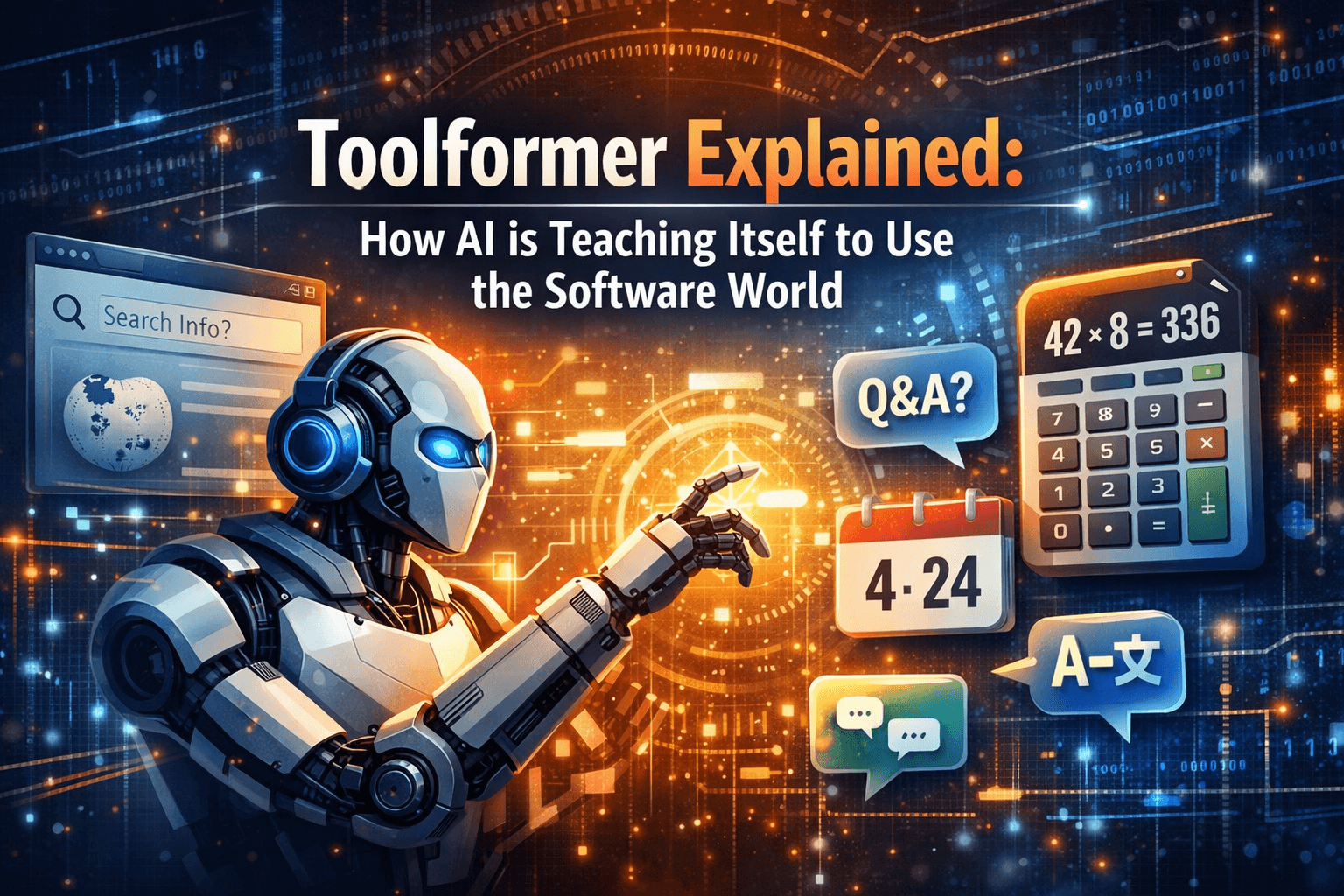

Toolformer Explained: How AI is Teaching Itself to Use the Software World

I’m Siddhesh, a Microsoft Certified Trainer, cloud architect, and AI practitioner focused on helping developers and organizations adopt AI effectively. As a Pluralsight instructor and speaker, I design and deliver hands-on AI enablement programs covering Generative AI, Agentic AI, Azure AI, and modern cloud architectures.

With a strong foundation in Microsoft .NET and Azure, my work today centers on building real-world AI solutions, agentic workflows, and developer productivity using AI-assisted tools. I share practical insights through workshops, conference talks, online courses, blogs, newsletters, and YouTube—bridging the gap between AI concepts and production-ready implementations.

Introduction

We are living in a strange era of AI where a model can write a convincing Shakespearean sonnet in seconds but might confidently tell you that 42 multiplied by 8 is 350 (it is 336).

This "paradox of competence" happens because Large Language Models (LLMs) are limited to what they absorbed from their training data. They don't look facts up or compute answers; they predict the statistically most likely next words. But what if an LLM could cheat? What if, instead of guessing the answer, it could just open a calculator or a web browser?

That’s the premise behind Toolformer, a groundbreaking paper from Meta AI. It introduces a method for models to teach themselves how to use external software tools, bridging the gap between creative generation and factual accuracy.

The Big Idea: Self-Taught Tool Use

The genius of Toolformer isn't just that it uses tools—we've had systems that do that before. The breakthrough is how it learns.

Traditionally, if you wanted an AI to use a calculator, humans had to painstakingly label thousands of examples (e.g., "User asks 2+2, Model should call Calculator"). This is slow and expensive.

Toolformer flips this script using a clever self-supervised loop:

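Guess: Prompted with a handful of human-written examples per tool, the model reads a text and proposes tool calls (like a search query) at positions where it judges a call is likely to help, often in the middle of a sentence. The positions are sampled from the model's own predictions, not picked at random.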

Execute: It actually runs the tool and gets a result.

Judge: It checks: Did seeing this result make it easier to predict the rest of the sentence?

Learn: If the answer is "Yes," the model teaches itself that this was a good time to use a tool. If "No," it discards the attempt.
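The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's code: the `Candidate` class, `build_training_examples`, and all the `toy_*` functions are hypothetical names, and the toy loss functions stand in for what would really be queries to a language model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Candidate:
    position: int                 # where in the text the call would be inserted
    call: str                     # hypothetical call syntax, e.g. "Calculator(42 * 8)"
    result: Optional[str] = None  # filled in by the Execute step


def build_training_examples(
    text: str,
    propose: Callable[[str], Iterable[Candidate]],
    execute: Callable[[str], str],
    loss_without: Callable[[str, Candidate], float],
    loss_with: Callable[[str, Candidate], float],
    threshold: float,
) -> List[Candidate]:
    kept = []
    for cand in propose(text):                                   # Guess
        cand.result = execute(cand.call)                         # Execute
        gain = loss_without(text, cand) - loss_with(text, cand)  # Judge
        if gain >= threshold:                                    # Learn: keep helpful calls
            kept.append(cand)
    return kept


# Toy stand-ins so the sketch runs; a real system would ask a language model.
def toy_propose(text: str) -> List[Candidate]:
    return [Candidate(3, "Calculator(42 * 8)"), Candidate(1, "Calendar()")]


def toy_execute(call: str) -> str:
    return {"Calculator(42 * 8)": "336", "Calendar()": "Today is Monday"}[call]


def toy_loss_without(text: str, cand: Candidate) -> float:
    return 2.0


def toy_loss_with(text: str, cand: Candidate) -> float:
    # Pretend the loss drops only when the tool result shows up in the text.
    return 0.5 if cand.result in text else 2.0


kept = build_training_examples(
    "42 times 8 is 336 .",
    toy_propose, toy_execute, toy_loss_without, toy_loss_with,
    threshold=1.0,
)
print([c.call for c in kept])
```

In this toy run only the calculator call survives, because its result is the only one that makes the rest of the sentence easier to predict. The surviving examples are what the model would then be fine-tuned on.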

Key Findings: Small Model, Big Results

The results were startling. The researchers used a relatively small model (GPT-J with 6.7 billion parameters) and trained it to be a Toolformer.

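David vs. Goliath: On math benchmarks and the LAMA fact-completion tasks, this 6.7B model outperformed the much larger GPT-3 (175B parameters) in the paper's zero-shot evaluation. On open-domain question answering, GPT-3 still came out ahead.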

Versatility: The model learned to use a calculator, a Q&A system, a Wikipedia search, a machine translation system, and a calendar, given only a handful of human-written demonstrations per tool rather than a large labeled dataset.

Precision: By offloading math to a calculator, the model sharply reduced the "arithmetic hallucinations" common in standard LLMs.
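To make the calculator hand-off concrete, here is a small Python sketch of what the offloading step could look like once the model has emitted a tool call inside its text. The bracketed call syntax, the `calculator` helper, and `fill_calculator_calls` are illustrative assumptions on my part, not the paper's actual implementation.

```python
import ast
import operator
import re

# Only these arithmetic operators are allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression: str) -> str:
    """Hypothetical calculator tool: evaluates + - * / without using eval()."""

    def ev(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -ev(node.operand)
        raise ValueError(f"unsupported expression: {expression!r}")

    value = ev(ast.parse(expression, mode="eval").body)
    # Round to two decimals, then drop trailing zeros ("336.00" -> "336").
    return f"{value:.2f}".rstrip("0").rstrip(".")


# Assumed call syntax: [Calculator(<expression>)], no nested parentheses.
CALL = re.compile(r"\[Calculator\(([^)]*)\)\]")


def fill_calculator_calls(text: str) -> str:
    """Run every calculator call found in the text and splice in its result."""
    return CALL.sub(
        lambda m: f"[Calculator({m.group(1)}) -> {calculator(m.group(1))}]",
        text,
    )


print(fill_calculator_calls("42 multiplied by 8 is [Calculator(42 * 8)] 336."))
```

The point of the design is that the model only has to decide when to call the tool and what to pass it. The arithmetic itself is done by ordinary, deterministic code.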

Technical Implementation: The "Filtering" Trick

For the developers reading this, the core algorithm relies on a specific loss-filtering mechanism.

Starting from a plain-text dataset $C$, the model samples a set of candidate API calls. For each candidate at a given position $i$ in the text, it compares two losses over the tokens that follow:

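$L_{min}$: The loss (uncertainty) of predicting the next tokens without the tool's result. In the paper this is the smaller of two baselines, which is where the name comes from: no API call at all, and the API call inserted without its response.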

$L_{tool}$: The loss of predicting the next tokens given the tool's output.

If $L_{min} - L_{tool}$ is at least a chosen threshold, the tool provided "surprisal reduction": its result made the following tokens measurably easier to predict. The model essentially says, "I wouldn't have guessed the next word correctly unless I saw this search result." These high-value examples are kept, and the model is then fine-tuned on text augmented with them.
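Here is a minimal Python sketch of that filtering rule, under a few assumptions. The function names (`nll`, `keep_call`), the threshold name `tau`, and the probability values are all made up for illustration. As I read the paper, the baseline is the smaller of two losses (no call at all, and the call without its result), and the loss is weighted by distance from the call position; this sketch uses plain unweighted negative log-likelihood to keep things short.

```python
import math
from typing import Sequence


def nll(token_probs: Sequence[float]) -> float:
    """Unweighted negative log-likelihood of the continuation tokens."""
    return -sum(math.log(p) for p in token_probs)


def keep_call(
    p_plain: Sequence[float],            # token probabilities with no API call
    p_call_only: Sequence[float],        # with the call inserted but no result
    p_call_and_result: Sequence[float],  # with the call and its result
    tau: float,
) -> bool:
    l_min = min(nll(p_plain), nll(p_call_only))  # best the model does without the result
    l_tool = nll(p_call_and_result)              # loss once the result is visible
    return (l_min - l_tool) >= tau


# Made-up probabilities the model assigns to the next two tokens.
helpful = keep_call([0.1, 0.5], [0.1, 0.4], [0.9, 0.5], tau=1.0)
unhelpful = keep_call([0.1, 0.5], [0.1, 0.4], [0.12, 0.5], tau=1.0)
print(helpful, unhelpful)
```

In the first case the result lifts the probability of the next token from 0.1 to 0.9, so the loss drops by well over the threshold and the call is kept. In the second case the result barely moves the prediction, so the call is discarded.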

Conclusion

Toolformer represents a shift from "Know-It-All" models to "Know-How-To-Ask" models. By giving AI the ability to acknowledge its own limitations and reach for a tool, we move closer to systems that are not just creative, but also factually grounded and reliable.

As we look forward, the question isn't just what AI can learn, but what software we can build for AI to use. If an AI can teach itself to use a calculator today, what happens when it teaches itself to use your IDE tomorrow?

Relevant Video:

Timo Schick | Toolformer Presentation

This video features Timo Schick, the lead author of the Toolformer paper, explaining the technical details of how the model learns to filter API calls and improve its zero-shot performance.